Predictive Accounting Solutions

Predictive Accounting on Aito

Turn Your Accounting Product into a Predictive Application

A predictive application is a SaaS product where every form, queue, and dashboard is backed by live predictions instead of hard-coded rules. Predictive accounting means GL codes that auto-fill, approvers that route themselves, bank transactions that match invoices automatically, and anomalies that surface before posting — every prediction explained, every override learned from.

Aito is the predictive database underneath. No model training, no MLOps, no data scientist on staff. Add a row, the next prediction reflects it. Aito has run AP automation at 95%+ accuracy in Nordic enterprise production since 2018, processing thousands of invoices per month.

Live Reference: accounting.aito.ai

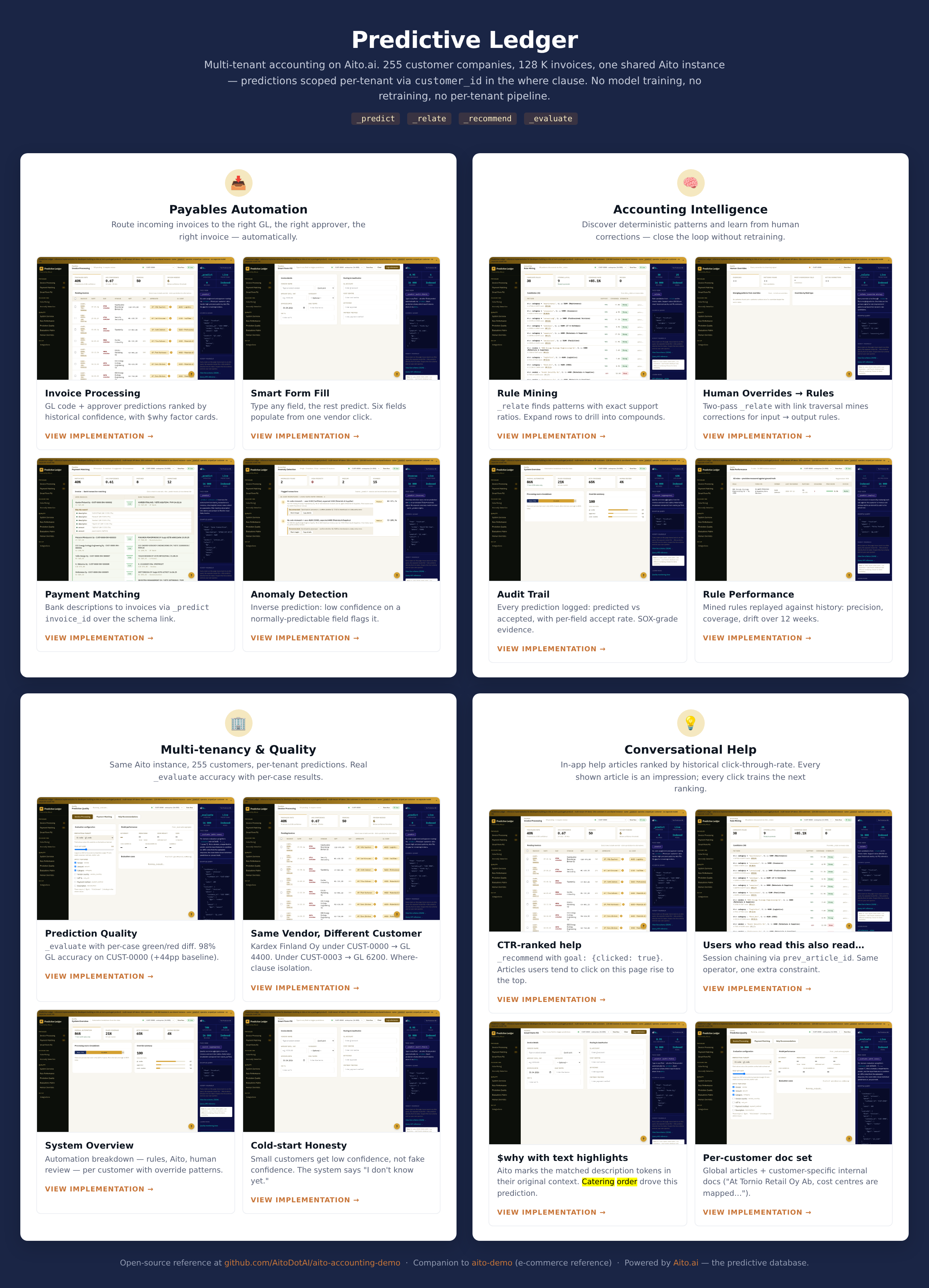

accounting.aito.ai is our open-source reference implementation of a multi-tenant predictive accounting SaaS — 255 customer companies, 128K invoices, all served from a single Aito instance with customer_id in the where clause. Same pattern you'd build on top of Aito for your own accounting product.

Explore the Use Cases → · Try the Live Demo → · Source on GitHub →

Nine predictive features, all from SQL-like queries:

- 🧾 Invoice processing — GL code, approver, payment method, cost center predictions per invoice with

$whyfactor decomposition. - 🪄 Smart form fill — Type any field, the rest predict. Vendor → fills GL, approver, cost centre, VAT %.

- 🏦 Payment matching — Bank transactions matched to invoices via schema link in a single

_predict invoice_idcall. - 🧠 Rule mining — Discover statistical patterns (

category=telecom & gl_code=6200 → approver=Timo, 15.8× lift) and promote them to rules with audit trail. - 🚨 Anomaly detection — Inverse prediction: if the actual GL isn't in top-3 of predicted, flag it for review.

- 💡 Help drawer — In-app help articles ranked by

_recommendagainst click history (CTR-ranked, like product recommendations). - 📊 Quality dashboard — Real

_evaluateaccuracy on held-out samples, with per-case green/red diff vs. always-predict-majority baseline. - ✋ Human overrides — Two-pass

_relatemines correction patterns into promotable rules. - 🏢 Multi-tenancy — One Aito table, 255 tenants. Same vendor, different

customer_id→ different prediction. No per-tenant infra.

Every prediction returns confidence, top-3 alternatives, and a $why decomposition with token-level highlighting — the same building blocks you'd use to ship explainable predictions in your own product.

How Much Can You Automate? (The Honest Answer)

Two things are true at once.

The verifiable facts. Aito has run AP automation at 95%+ accuracy in Nordic enterprise production since 2018, on SAP + UiPath + Aito stacks — same operators you'd use. Industry-wide, automated AP error rates land in the 0.1-0.8% range when properly configured. Best-in-class AP teams hit 60-80% touchless processing rates and process invoices at $2.78 each (vs. $5-10 manual). Top performers achieve 3.1-day cycle times vs. 14.6 days manual. These are real, citable numbers, not aspirations.

The conditional answer. All accounting processes are super-regular at their core — the consistency principle, procurement contracts, and chart-of-accounts taxonomies see to that. What differs between customers is how much of your incoming transaction volume matches prior patterns vs. arrives as a first-time pattern. Predictive accounting is a conditional-probability engine that works the same way on each transaction; how many of your transactions benefit from it depends on the volume mix.

Why accounting data is unusually predictable to begin with

Before the variance, the structural point most ML pitches miss: accounting data is more regular than typical ML training data, by law and by contract.

The GAAP consistency principle and the equivalent IFRS rules require companies to apply the same accounting methods consistently from period to period. That's a legal and audit requirement, not best practice. Same vendor invoicing the same company in two consecutive months → presumed to hit the same GL account unless there's a documented reason otherwise. Procurement contracts and chart-of-accounts taxonomies lock the mapping further.

This is why on regular accounting data, three observations of a stable multi-field pattern produces ~90% confidence with realized error around 1%. By five observations, error rates well under 1% are typical. The top confidence bucket (>95%) carries near-zero realized error in production — Aito's confidence is calibrated, so when it says >95%, the realized rate matches.

Three pattern-repetition profiles, three different ranges

Underneath all three, the process is the same — same coding logic, same conditional-probability math, same calibrated confidence. What changes between deployments is the volume mix: how much of the incoming transaction stream lands on patterns with ≥3 prior examples vs. arrives as a first-time pattern.

| Profile | Examples | What the volume mix looks like | Range we typically see |

|---|---|---|---|

| Mostly-recurring | Recurring B2B AP (utilities, telco, scheduled supplier payments); sales invoicing; bank reconciliation; PO-backed direct procurement; period-end JV automation | Stable customer/supplier base, same vendors invoicing the same accounts every month — the bulk of incoming transactions match patterns already seen many times | Near the top of industry benchmarks — 70-90%+ touchless; 95%+ accuracy seen in Nordic enterprise AP production |

| Mixed | Typical mid-market AP at a mature tenant; industrial maintenance with stable supplier base; multi-channel retail buying | Combination of repeat-pattern transactions and one-offs; meaningful share of project-specific spend or new-supplier onboardings each month | Mid-tier of industry benchmarks — 50-70% touchless on common configurations; Q-Automate has reported 80% GL automation at Jokiväri on Lemonsoft, on the higher end |

| Mostly-snowflake | Employee-expense AP (business travel, restaurants, taxis, ad-hoc reimbursements); construction project AP with subcontractor sprawl; professional services with project-specific passthroughs | People-driven or project-driven spend by construction — many incoming transactions are first-time patterns (new vendor, new combination). The coding logic is just as regular; the share of incoming transactions that match prior patterns is low | Headline touchless rate lower — we've seen ranges in the 20-40% area; field-level prediction still produces 3-4 of 5 fields pre-filled per invoice with $why, so manual-effort reduction is much larger than the touchless headline implies |

The conditional-probability machinery is the same across all three profiles. Three observations of any stable pattern produces ~90% confidence with ~1% realized error, regardless of profile. What differs is how many of your incoming transactions land on patterns with ≥3 prior examples vs. arrive as novel ones.

Find your profile in 15 minutes: run _evaluate on a held-out slice of your real data. Per-field accuracy and per-confidence-bucket calibration tell you which range your tenants sit in. Cheaper than a procurement cycle.

Why three examples is enough on regular data

Where consistency rules apply, accounting data isn't noisy. The same supplier maps to the same GL 95%+ of the time; the same vendor × category routes to the same approver. The conditional probability collapses fast because there's not much disagreement to average over.

| Repetitions of a stable pattern | Top-1 confidence | Typical realized error |

|---|---|---|

| 0 | base rate ~5-15% — "no prediction yet" | n/a |

| 1 | ~50-70% — single suggestion | high |

| 3 | ~85-92% — auto-fill territory | often ~1% on regular data |

| 5 | ~92-95%+ | well under 1%, approaching the calibration floor |

| 10+ | at the drift ceiling | calibrated to confidence; near zero in the top bucket |

Not every field is equally stable, even on regular workloads

A subtle but important distinction: even when the workload is regular, not every field is equally stable. The split matters because it tells you which predictions need to be more reactive to recent overrides.

| Field type | Stability | Why |

|---|---|---|

| GL account, cost center, expense category, VAT % | High — long-term stable | Locked by chart-of-accounts taxonomy and consistency principle. Same vendor → same account year over year. |

| Payment terms, payment method | High — derived from amount, country, category | Not vendor- or person-dependent. |

| Approver / processor / reviewer | Medium — changes with personnel | Vacations, role changes, team restructuring break recurring person-keyed patterns. |

| One-off vendor accounts (snowflake tail) | Low by construction | No prior history to learn from; vendor-locked fields require human judgement. |

Two practical implications: (a) person-keyed predictions need to be more reactive to recent overrides than account-keyed predictions — handled naturally because every override is the next prediction's training signal; (b) chart-of-accounts reorganizations are documented events, so a brief drop in account-keyed accuracy after a reorg is expected and recoverable within ~3 observations of the new mapping.

The snowflake tail and what still works there

Purchase invoices in particular carry a heavier snowflake tail than other accounting workloads. Employee business trips alone generate transportation, accommodation, restaurant, and taxi receipts that may never repeat in the same tenant. Statistical snowflakes by construction. No amount of data resolves them.

The honest answer: vendor-keyed predictions don't work on the snowflake tail. A vendor seen for the first time has zero conditional history. Aito returns honest low confidence, not a wrong high-confidence answer.

What still works on snowflake invoices

Different prediction targets depend on different signals. Not every field is vendor-locked:

| Field on a snowflake invoice | Predicted from | Useful confidence? |

|---|---|---|

| GL category | Description text + amount range | ✅ "Lunch meeting" → meals & entertainment |

| Approver | Employee / submitter | ✅ Their manager approves their expenses |

| Cost center | Project code or department | ✅ Tied to the person, not the vendor |

| VAT % | Amount + country + category | ✅ Not vendor-dependent |

| Specific GL account | Vendor history | ❌ Vendor-locked, falls to low confidence |

| Vendor master ID | Vendor history | ❌ Vendor-locked, falls to low confidence |

Smart Form Fill on a snowflake doesn't return blank fields. It returns field-level predictions with field-level confidence, with $why rendered so the reviewer sees what was used. Three or four of five fields auto-fill; the vendor-locked fields surface for human judgement.

What production actually looks like — two anchor points

Anchor 1 — recurring B2B AP ("highly regular"). A workload dominated by recurring supplier payments looks like:

- ~80%+ fully automated at the high-confidence threshold, realized error near zero.

- ~15-20% reviewed-with-prefill — mostly the medium-confidence tail.

- ~0-5% mostly manual — the actual one-off long tail.

Nordic enterprise AP deployments on this shape have run at 95%+ accuracy in production since 2018.

Anchor 2 — heavy snowflake tail (employee-expense-heavy AP, "highly diverse"). Splits roughly into thirds:

- ~1/3 fully automated. Top confidence bucket. Realized errors essentially zero — Aito's confidence is calibrated.

- ~1/3 reviewed but pre-filled. Mid-confidence across most fields. Reviewer confirms instead of types — 4-5× speedup over manual coding.

- ~1/3 partially filled, mostly manual. Snowflake invoices where vendor-locked fields are unknowns. Even here, GL category, approver, and cost center are usually pre-filled with

$whyrendered, so the human judges 1-2 fields, not all 5.

Both are real production observations. Most products serve a mix of tenant shapes and land between the two anchors.

Buyer diligence: run _evaluate per field

The single best 15-minute test is to run _evaluate on a held-out slice of your real data. Pay attention to two things:

- Per-field accuracy. Description-keyed fields (GL category) typically hit 90%+ even on diverse workloads; vendor-locked fields drop sharply on the snowflake tail.

- Per-confidence-bucket calibration. The top bucket should land at near-zero realized error if the model is well-calibrated. If it doesn't, your tiered-confidence UI plan won't work.

Your UI keys off field-level confidence per prediction: auto-fill the high-confidence fields, suggest the medium, route the low to human review with $why. Cold-start handles itself field by field, confidence-bucket by confidence-bucket — the first three observations of any stable pattern already produce auto-fill-quality confidence. See the Cold-Start Honesty use case for the full breakdown.

The drift ceiling, honestly

Per-field accuracy on the recurring core caps around 92-95% from drift (vendor account changes, tax-code shifts, org reorgs, personnel changes for person-keyed fields). Per-field accuracy on the snowflake tail caps lower; that ceiling is structural. Tenant-wide headline accuracy is the volume-weighted blend.

A predictive AP product covering employee-expense-heavy tenants will see lower headline accuracy than one serving recurring-B2B tenants — that's the data, not the model. Both numbers are correct, and both are useful, as long as your UI is field-level confidence-aware.

The Override → Learn Loop (Why You Don't Need MLOps)

User changes a predicted GL from 6200 to 6210 at 14:00:23. The next request that hits a similar invoice — different invoice, same vendor, same description — reflects the new pattern in its conditional probability. No retrain queue. No model deploy. No A/B holdout.

The override IS the training signal because there is no separate model file. Aito stores rows; predictions are computed from rows at query time. Write the override row, the next prediction reads it.

| In a typical ML pipeline | In a predictive database |

|---|---|

| User correction → label store | User correction → INSERT |

| Nightly retraining job | (none) |

| Model artifact + deploy | (none) |

| Canary, holdout, regression monitoring | (none) |

| 24-72 hours from correction → live | <1 second |

Two consequences that matter to a product team:

- Multi-tenant by default. Same code path serves 1 tenant or 1,000 tenants. Different tenants live different realities; same query returns different conditional probabilities.

- Compliance is simpler. "Right to explanation" → return

$whyfrom the prediction. "Right to be forgotten" → DELETE the rows. There's no model artifact derived from user data sitting in a separate registry.

Try Live Invoice Processing Predictions

Run the same predictions used by accounting.aito.ai (and by production AP deployments behind RPA robots) directly from this page. These queries hit our hosted demo database — no signup, no setup.

Interactive Accounting Predictions

Run live queries against accounting.aito.ai's demo database — 255 customer companies, 128K invoices, predictions scoped per-tenant via customer_id

GL Code (Telia, CUST-0000)

Predict the GL code for a Telia Finland Oyj invoice belonging to customer CUST-0000

{

"from": "invoices",

"where": {

"customer_id": "CUST-0000",

"vendor": "Telia Finland Oyj",

"amount": 890.5

},

"predict": "gl_code",

"select": [

"$p",

"feature",

"$why"

]

}Prove It on Your Data in 15 Minutes

The fastest way to disqualify Aito for your use case is to run _evaluate on a sample of your real invoices.

- Export 1,000-10,000 invoices from your ERP as CSV (vendor, amount, description, GL code, approver, anything else you'd predict on).

- Upload to a free Aito sandbox.

- Run

_evaluateon a held-out slice. Get per-class accuracy, top-3 accuracy, confusion matrix, and a baseline-vs-Aito diff. - Compare to the always-predict-majority baseline and to whatever you have today.

If the lift over baseline is convincing, schedule a technical review. If not, you've spent an afternoon and saved a procurement cycle.

_evaluate is the same operator that powers the Quality Dashboard at accounting.aito.ai. Same code path you'd use in production to monitor accuracy per tenant.

How a Predictive Accounting Workflow Runs

Step 1: Capture & extract — Invoice arrives via OCR, e-invoicing, or manual entry. Vendor, amount, line items, dates land in your accounting database.

Step 2: Predict & explain — Your app calls Aito with the captured fields. Aito returns the predicted GL code, approver, cost centre, and payment terms — each with a probability, top-3 alternatives, and a $why decomposition that shows which input fields drove the prediction.

Step 3: Auto-apply or escalate — High-confidence predictions auto-fill and submit. Mid-confidence rows show the prediction but ask for confirmation. Low-confidence rows route to human review with the $why rendered as a tooltip.

Step 4: Learn from corrections — Every override goes back into the same Aito table. The next prediction reflects it instantly — no retraining, no model deployment.

Build vs. Buy: The Honest Math

If you're a 5-15 person product team building a predictive accounting SaaS, here's the realistic comparison:

Build it yourself. A working prediction loop with multi-tenancy, retraining, evaluation, and override capture takes ~6 months and ~1.5 FTEs (one ML engineer, ~0.5 backend engineer for plumbing). At €100-130K loaded ML salary plus infrastructure: year-1 TCO €200-400K, year-2 €120-200K maintenance. You get total control over the model internals.

Drop in Aito. API key and SQL-like queries get you to a working prediction loop in 1-2 weeks. Multi-tenancy is customer_id in where. Aito plans start at €0 (free sandbox), €75/mo (small production), €350/mo (growth), with custom enterprise tiers — see pricing. You give up some control over model internals; you gain ~5 months of engineering you didn't have to spend.

The breakeven question. If your product needs predictions as a feature, build-vs-buy is rarely the right framing — the question is time to first paying tenant. Six months later means six months less revenue, six months more burn, and a smaller dataset to learn from when you do ship.

How This Compares: Rossum, Hypatos, GPT-4 + a Vector DB

The three honest alternatives we hear most:

vs. Rossum / Hypatos / Klippa (invoice-extraction-as-a-service). They solve "extract structured fields from a PDF." Aito solves "given the structured fields, predict the next ones (GL code, approver, cost center) using your tenant's own history." They're complementary, not competitive — most predictive accounting products use one of these for OCR and Aito for downstream prediction.

vs. GPT-4 + a vector database. LLMs are great at one-shot reasoning over unstructured input; they're expensive, slow (~1-3 sec), opaque (no $why), and don't get smarter when a user corrects them. Aito returns conditional probability with confidence and explanation in 20-200ms. For volumetric AP work — thousands of invoices/day per tenant — the unit economics don't work with an LLM in the hot path.

vs. building it on scikit-learn / Vertex AI / SageMaker. You can. You'll spend 6 months on it (see Build vs. Buy above). The thing you're building is a worse version of a predictive database, with retraining infrastructure you have to operate.

The wedge: a predictive database is the substrate. OCR sits in front of it. LLMs sit beside it for the cases that need free-form reasoning. Aito does the part that's repetitive, volumetric, and per-tenant — which is the bulk of accounting work.

Drop-In Predictive Layer for an Existing ERP

If you're not building a new SaaS but augmenting an existing accounting/ERP install, Aito works as a prediction engine called via API. No migration, no rip-and-replace. Send invoice data to Aito, get back predictions with $why explanations. Your ERP applies high-confidence predictions automatically and flags the rest.

Works with any ERP that has an API — SAP, Oscar Software, Lemonsoft, NetSuite, or custom-built systems. Typical integration takes days, not months. See the ERP solutions page if you're building a new predictive ERP product rather than augmenting an existing one.

Proven in Production

Aito has run AP automation at 95%+ accuracy in Nordic enterprise production since 2018 — multiple SAP + UiPath + Aito stacks, thousands of invoices per month, the same _predict and $why decomposition exposed in this page. Predictive operators also ship inside RPA-partner products like Q-Automate, deployed at end-customers like Jokiväri.

Q-Automate × Jokiväri

Q-Automate (formerly Q-Factory) integrated Aito's predictive operators into their RPA product to automate purchase-invoice coding for Finnish home renovation company Jokiväri on Lemonsoft ERP. Q-Automate used Aito Evaluations to prove the automation potential against real invoice history in hours, then shipped the live robot.

Key Achievements:

"The purchase invoice robot is able to account for invoices almost independently using artificial intelligence."

Multi-Tenant SaaS Pattern

If you're building a predictive accounting product for multiple customers, the same Aito table serves every tenant — customer_id in the where clause scopes the conditional probability to that tenant's history. accounting.aito.ai runs 255 tenants this way (3 enterprise / 12 large / 48 midmarket / 192 small), one shared Aito DB, sub-100ms predictions.

{

"from": "invoices",

"where": { "customer_id": "CUST-0001", "vendor": "Telia Finland Oyj" },

"predict": "gl_code"

}

Same vendor, different customer_id returns a different prediction — because the conditional probability is computed only over that tenant's rows. No per-tenant index, no per-tenant model, no per-tenant retraining schedule.

Security, Compliance & Data Residency

- EU data residency by default. Aito is hosted in AWS Frankfurt (eu-central-1). US deployment available on request.

- Encryption. TLS 1.2+ in transit; AES-256 at rest.

- Tenant isolation. Per-customer Aito instances by default. Multi-tenant pattern (one Aito DB, many

customer_idvalues) is opt-in for products that want shared infrastructure. - DPA. GDPR-aligned Data Processing Agreement available on request.

- Audit logging. Every API call is logged with timestamp, key ID, query, and response time.

- Right to be forgotten. A predictive database has no model artifact derived from your data —

DELETEthe rows and the predictions reflect it from the next query forward.

We do not currently hold a SOC 2 Type II attestation; we share our security posture and pen-test results under NDA on request. If your procurement requires SOC 2 today, contact us — we work with several customers in regulated finance under DPA + security questionnaire.

Get Started

Ready to ship a predictive accounting product — or add predictive accounting to one you already have?

Try accounting.aito.ai → — see what a predictive accounting SaaS looks like end-to-end before you build one.

Read the source on GitHub → — Apache 2.0, 255-tenant reference architecture, 9 use-case guides.

Schedule a Technical Review → — walk through your specific accounting use cases with our team.

Start Free Trial → — point Aito at your own invoice data in our sandbox environment.

Why we built all of these on one substrate

Predictive Accounting, Predictive ERP, and Predictive E-commerce are not three separate products. They are three instances of the same pattern: a SaaS application designed around the premise that the user should never make a decision the data has already made for them. The substrate is a predictive database. Prediction becomes a query rather than a project.

For the full architectural argument, see The Predictive Application. The canonical explanation of why per-prediction cost determines what gets built, and what changes when that cost collapses.

Address

Episto Oy

Putouskuja 6 a 2

01600 Vantaa

Finland

VAT ID FI34337429